The Coherency Problem — When AI Says One Thing and Does Another

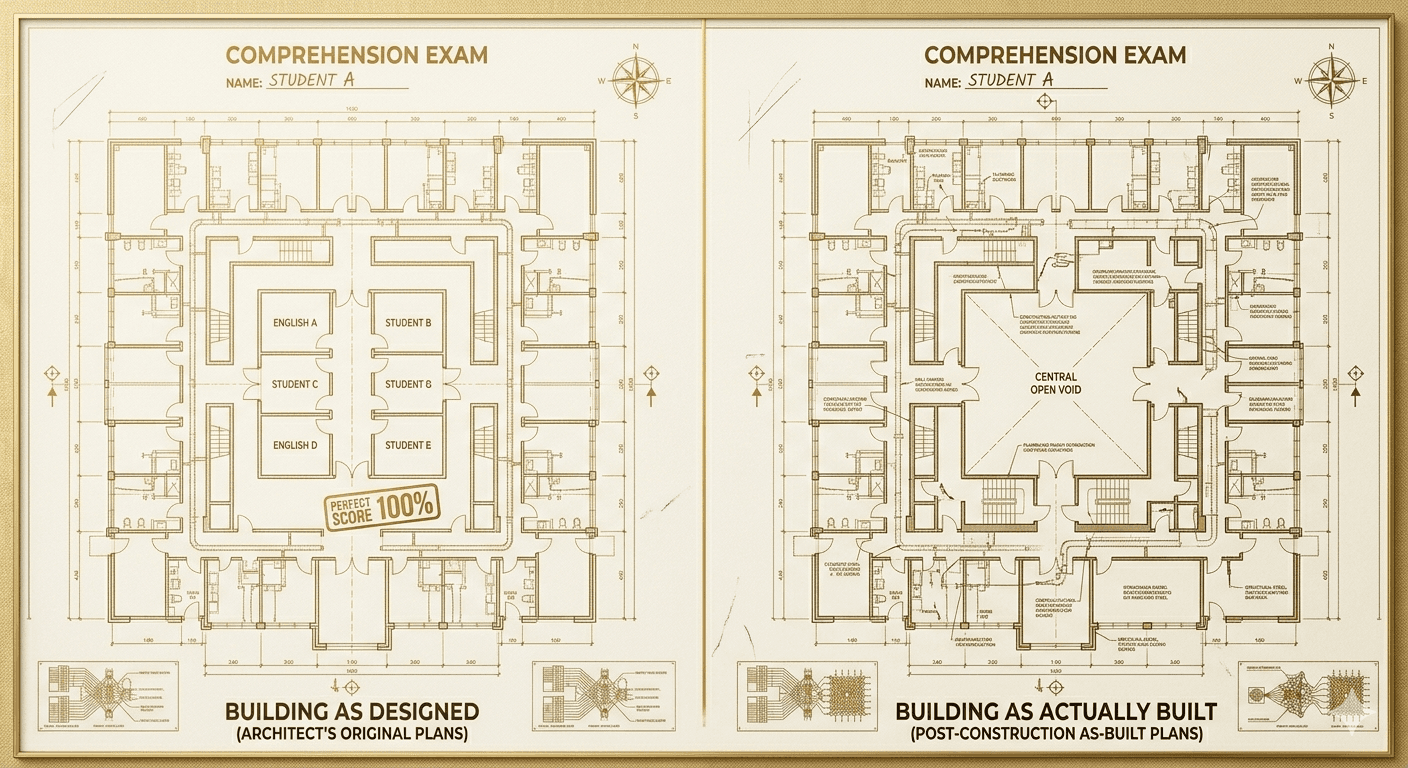

Your AI assistant confidently describes your architecture. Half of what it says is wrong. Not because it lied — because it could not tell the difference between what the documentation claims and what the code actually does.

Your AI assistant describes your project’s authentication system. It says the login flow uses JWT tokens with a 24-hour expiry. It sounds confident. The description is well-structured. There’s just one problem: you switched to session-based auth three weeks ago. The AI isn’t lying. It read the architecture document. The architecture document is wrong — nobody updated it after the change.

This is the coherency problem. It’s what happens when a system can’t tell the difference between what it says and what it does. And it’s not just an AI problem. It might be the most familiar problem in public life.

The Trust Gap

Coherency, at its core, is the alignment between claims and reality. When those two things match, you get trust. When they diverge, you get something that looks functional but isn’t — and the divergence is invisible until something breaks.

We see this everywhere. A company’s marketing says they value sustainability. Their supply chain tells a different story. A political platform promises fiscal responsibility. The budget tells a different story. A partner says they’re committed. Their behavior tells a different story.

The gap between what is said and what is done is not always dishonesty. Often it’s drift — the slow, invisible divergence between a statement made in good faith and a reality that has moved on without updating the statement. Nobody decided to be incoherent. The world changed and the claims didn’t.

Software has this same problem, and AI makes it worse.

Documentation Drift

In any codebase that’s been alive for more than a few months, the documentation and the code will have drifted apart. A README says the project uses PostgreSQL; someone switched to SQLite for local dev and forgot to note it. An API doc describes five endpoints; two were deprecated and a sixth was added. An architecture diagram shows three microservices; a fourth was spun up during a sprint and never diagrammed.
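The endpoint example can be checked mechanically. The sketch below assumes the documented endpoints have already been pulled out of the API doc and the implemented ones out of the router. Both lists are hypothetical, and `endpoint_drift` is an illustrative name rather than an existing tool. Once the two sets exist, detecting drift is just a pair of set differences:

```python
def endpoint_drift(documented: set[str], implemented: set[str]) -> tuple[set[str], set[str]]:
    """Return (stale, undocumented) endpoints.

    stale: listed in the docs but no longer served by the code.
    undocumented: served by the code but missing from the docs.
    """
    stale = documented - implemented
    undocumented = implemented - documented
    return stale, undocumented


# Hypothetical data mirroring the example: five documented endpoints,
# two since deprecated, and a sixth added without a doc update.
documented = {"GET /users", "POST /users", "GET /orders", "GET /reports", "GET /export"}
implemented = {"GET /users", "POST /users", "GET /orders", "GET /health"}

stale, undocumented = endpoint_drift(documented, implemented)
print("stale:", sorted(stale))                # stale: ['GET /export', 'GET /reports']
print("undocumented:", sorted(undocumented))  # undocumented: ['GET /health']
```

The comparison itself is trivial. The real work is extracting reliable claims from prose and reliable facts from code.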

For human developers, this is annoying but survivable. You learn to distrust documentation and read the code instead. You carry institutional knowledge that tells you which docs are current and which are artifacts.

AI agents usually can’t do this. Unless told otherwise, they treat every document in their context as equally authoritative. If the architecture doc says JWT and the code says sessions, the agent has no built-in way to know which one is current. It picks one (often the document, because documents are structured and code requires interpretation) and proceeds with full confidence on false information.

This is how you get AI-generated regressions: the agent faithfully implements a specification that no longer matches reality.
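One way to stop an agent from defaulting to whichever source reads most cleanly is to give it an explicit precedence order and have it report conflicts instead of silently choosing. The sketch below is illustrative only. The source names, the ranking (running code above config above prose docs), and the `Claim` type are assumptions, not an established standard.

```python
from dataclasses import dataclass

# Hypothetical precedence: lower number wins. Running code outranks
# configuration, which outranks prose documentation.
PRECEDENCE = {"code": 0, "config": 1, "docs": 2}


@dataclass(frozen=True)
class Claim:
    topic: str   # what the claim is about, e.g. "auth"
    value: str   # what the source asserts, e.g. "jwt"
    source: str  # one of the keys in PRECEDENCE


def resolve(claims: list[Claim]) -> dict[str, Claim]:
    """For each topic, keep the claim from the highest-precedence source."""
    winners: dict[str, Claim] = {}
    for claim in claims:
        current = winners.get(claim.topic)
        if current is None or PRECEDENCE[claim.source] < PRECEDENCE[current.source]:
            winners[claim.topic] = claim
    return winners


def conflicts(claims: list[Claim]) -> list[str]:
    """List topics where sources disagree, so the drift can be reported."""
    values: dict[str, set[str]] = {}
    for claim in claims:
        values.setdefault(claim.topic, set()).add(claim.value)
    return sorted(topic for topic, vals in values.items() if len(vals) > 1)


claims = [
    Claim("auth", "jwt", "docs"),      # the stale architecture document
    Claim("auth", "session", "code"),  # what the login handler actually does
]
print(resolve(claims)["auth"].value)  # session
print(conflicts(claims))              # ['auth']
```

Resolving and flagging are kept separate on purpose. The agent can proceed on the winning claim, but the conflict is still surfaced so someone fixes the stale document.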

The Accountability Problem

The deeper issue is accountability. In any system — software, government, business, relationships — coherency requires a mechanism for detecting when claims and reality have diverged, and a process for correcting the divergence.

Democracies have this (imperfectly) through free press, independent judiciary, and elections. Businesses have this (imperfectly) through audits, financial reporting, and market competition. Relationships have this (imperfectly) through communication and, eventually, consequences.

Most software projects have no coherency mechanism at all. Documentation is written once and forgotten. There is no system that compares what the docs say against what the code does. There is no alert when they drift apart. There is no forced reconciliation before the next deployment.

Human-only teams get away with this because people compensate. AI-assisted teams don’t. The agent trusts the docs implicitly. If the docs are wrong, the agent is wrong, and it will be wrong with absolute confidence.

What Coherency Enforcement Looks Like

Solving the coherency problem requires treating it as an engineering discipline, not a best practice. Specifically:

- Automated drift detection — tooling that continuously compares documentation claims against live code and flags divergences.

- Truth hierarchies — when two sources conflict, the system needs a defined rule for which one wins. This has to be explicit, not implicit.

- Blocking enforcement — coherency violations should block deployment, not just generate warnings, the same way an auditor withholds sign-off until the numbers reconcile.

- Measurable coherency scores — a quantified metric that tells you how aligned your documentation is with your code at any given moment. Not a feeling. A number.
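Taken together, these pieces can be sketched in a few dozen lines. This is a minimal illustration, not a reference implementation. It assumes documentation claims and code observations have already been extracted into key/value pairs (the extraction step is the hard part and is omitted here). It treats the code as the winning source and uses a hypothetical threshold of 0.9. The function names and data are illustrative.

```python
def coherency_report(doc_claims: dict[str, str], observed: dict[str, str]) -> tuple[float, list[str]]:
    """Compare documented claims with what the code reports.

    Returns (score, violations). The score is the fraction of documented
    claims that match the observed value. A claim about something the code
    no longer has (missing key) also counts as a violation.
    """
    if not doc_claims:
        return 1.0, []
    violations = []
    for key, claimed in sorted(doc_claims.items()):
        actual = observed.get(key)  # the code is treated as the source of truth
        if actual != claimed:
            violations.append(f"{key}: docs say {claimed!r}, code says {actual!r}")
    score = 1 - len(violations) / len(doc_claims)
    return score, violations


def gate(score: float, violations: list[str], threshold: float = 0.9) -> int:
    """Print the findings and return an exit code: 0 passes, 1 blocks."""
    for violation in violations:
        print("DRIFT", violation)
    print(f"coherency score: {score:.2f} (threshold {threshold:.2f})")
    return 0 if score >= threshold else 1


# Hypothetical extracted facts, echoing the examples earlier in the article.
doc_claims = {"auth": "jwt", "database": "postgresql", "services": "3"}
observed = {"auth": "session", "database": "postgresql", "services": "4"}

score, violations = coherency_report(doc_claims, observed)
exit_code = gate(score, violations)
# In a CI job, passing exit_code to sys.exit() makes a failing score
# stop the pipeline instead of merely logging a warning.
```

Here two of three claims have drifted, so the score is 0.33 and the gate returns 1. The number gives you the "measurable" part, and the nonzero exit code gives you the "blocking" part.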

Why This Matters Beyond Code

The coherency problem is ultimately a trust problem — and trust is the foundation of every functioning system. When a codebase is incoherent, AI agents produce unreliable output. When institutions are incoherent, citizens lose faith. When organizations are incoherent, employees disengage.

The pattern is universal: systems that cannot verify their own honesty eventually fail. Not because anyone chose to be dishonest, but because drift is the natural state of every complex system, and without active enforcement, claims and reality will always diverge.

The interesting question isn’t whether drift happens. It’s whether you have a mechanism to detect it, measure it, and correct it before it compounds into a crisis of trust.

In software, that mechanism is buildable today. The building blocks already exist: static analysis, test suites, and CI pipelines that can fail a build. The question is whether teams treat coherency as a first-class engineering concern or continue to hope that their docs are close enough.

For AI-assisted development, “close enough” doesn’t work. The agent takes you at your word. You’d better make sure your word is accurate.

Fourth in a series examining the real problems people face with AI — and the structural patterns that connect them to trust in every domain.

You’re reading 4 of 8.
