The Real AI Test — Measuring Understanding, Not Output

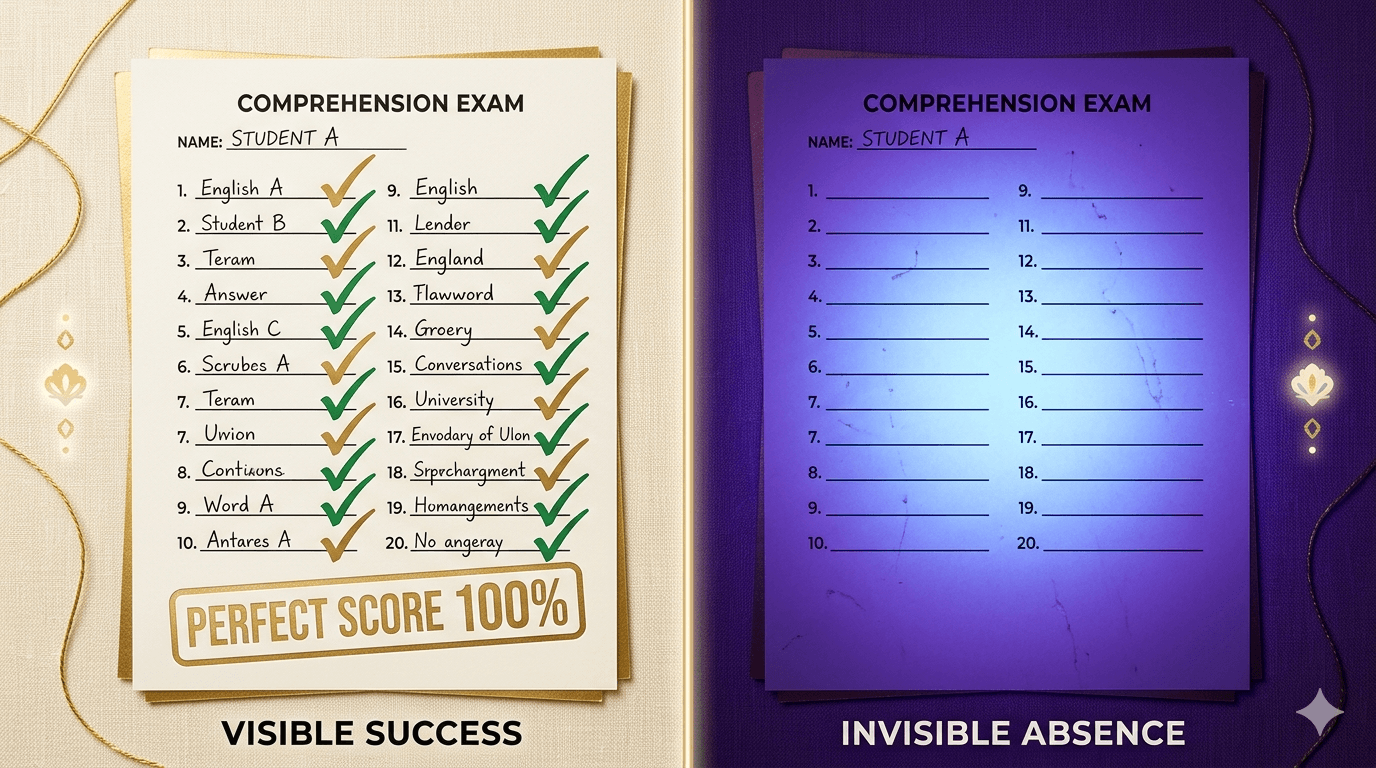

We test AI the same way we test students: can you produce the right answer? But the right answer does not mean you understood the question. What would it look like to actually measure whether an AI understood what you asked?

Ask an AI to write a function that sorts a list, and it will. Ask it to build an inventory system with twelve overlapping business rules buried across four paragraphs of specification, and watch what happens. It will produce something that looks right. It will compile. It might even pass simple tests. But the subtle rules, the ones that require actually understanding the spec rather than pattern-matching against it, are where it tends to go wrong.

We have a measurement problem in AI. And it’s the same measurement problem we have everywhere else.

The Education Parallel

For decades, education researchers have argued that standardized testing measures the wrong thing. A student who memorizes multiplication tables will ace a math test. A student who understands why multiplication works might struggle with the same test if the format is unfamiliar — even though their understanding is deeper.

We test recall because recall is easy to measure. Understanding is hard to measure. So we build systems that optimize for the measurable thing and then act surprised when graduates can pass tests but can’t solve problems.

AI benchmarks work in much the same way. HumanEval asks a model to complete short, self-contained Python functions from a docstring, and many of those tasks resemble code that is common in public repositories. MBPP poses similarly short, entry-level programming problems. These benchmarks measure whether the model can produce a known answer to a known question. They don’t measure whether it understood the question, because understanding only becomes visible when the question contains ambiguity, contradiction, or hidden complexity.

Confidence Without Comprehension

This is the gap that matters in practice. An AI model that scores 95% on coding benchmarks can still fail on real-world tasks that require holding multiple constraints in working memory, resolving conflicts between them, and making judgment calls about edge cases the specification didn’t explicitly address.

We see the same pattern in hiring. A candidate with a perfect resume and polished interview answers gets the job. Three months later, it becomes clear they can’t handle ambiguity, can’t adapt when requirements change, and freeze when a problem doesn’t match a familiar pattern. The interview tested presentation. It didn’t test comprehension.

Social media amplifies this further. The most confident voice gets the most engagement. Nuance is punished. Certainty is rewarded. We’ve built information systems that optimize for the appearance of understanding — and AI benchmarks are doing the same thing to machine intelligence.

What a Real Test Would Look Like

If you wanted to actually measure whether an AI agent understands what you asked, you’d need to design specifications that contain deliberate cognitive traps — places where pattern matching produces the wrong answer and only genuine comprehension produces the right one.

For example:

- Conflicting merge semantics — the spec says “merge these records” but different fields require different merge strategies. Does the agent notice, or does it apply one strategy uniformly?

- Precision traps — the spec involves calculations where floating-point arithmetic will silently produce wrong answers. Does the agent handle this, or does it trust the math?

- Boundary ambiguity — the spec says “between 10 and 20.” Is that inclusive or exclusive? The right answer is buried in a different section. Does the agent find it?

- Atomicity requirements — a batch operation must be all-or-nothing, but the spec mentions this once in passing. Does the agent build a transaction or a loop?
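
To make the precision trap concrete, here is a minimal sketch in Python. The function names and the three-item price list are illustrative assumptions, not part of any real specification. It shows how summing prices as binary floats drifts away from the exact decimal total, while the standard library's `decimal.Decimal` keeps the cents exact.

```python
from decimal import Decimal

def naive_total(prices):
    """Sum prices as binary floats: the pattern-matched answer."""
    return sum(prices)

def exact_total(prices):
    """Sum prices as decimals parsed from strings, so cents stay exact."""
    return sum((Decimal(p) for p in prices), Decimal("0"))

# Hypothetical input: three items priced at 0.10 each.
prices = ["0.10", "0.10", "0.10"]

naive = naive_total([float(p) for p in prices])
exact = exact_total(prices)

print(naive == 0.30)             # False: the float sum is 0.30000000000000004
print(exact == Decimal("0.30"))  # True
```

Both versions look correct on a quick read, and both pass a test that only checks the total is "about 0.30". Only a check for exact equality exposes the difference.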

These aren’t trick questions. They’re exactly the kind of complexity that exists in every real specification. The difference between a good developer and a mediocre one is whether they catch these. The difference between a useful AI and a dangerous one is the same.
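
The atomicity trap from the list above can be sketched the same way. This is an illustrative example under stated assumptions: the inventory is a plain in-memory dictionary, and the function names and SKUs are hypothetical. A real system would more likely rely on a database transaction, but the contrast between a loop and an all-or-nothing batch is the same.

```python
def apply_batch_loop(stock, changes):
    """Loop version: applies changes one at a time, mutating as it goes."""
    for sku, delta in changes:
        if stock.get(sku, 0) + delta < 0:
            raise ValueError(f"insufficient stock for {sku}")
        stock[sku] = stock.get(sku, 0) + delta

def apply_batch_atomic(stock, changes):
    """All-or-nothing version: stages changes on a copy, commits only if all succeed."""
    staged = dict(stock)
    for sku, delta in changes:
        new_qty = staged.get(sku, 0) + delta
        if new_qty < 0:
            raise ValueError(f"insufficient stock for {sku}")
        staged[sku] = new_qty
    # Commit: reached only when every change in the batch was valid.
    stock.clear()
    stock.update(staged)

# Hypothetical batch whose second change must fail (only 1 gadget in stock).
changes = [("widget", -2), ("gadget", -5)]
loop_stock = {"widget": 5, "gadget": 1}
atomic_stock = {"widget": 5, "gadget": 1}

for apply, stock in ((apply_batch_loop, loop_stock), (apply_batch_atomic, atomic_stock)):
    try:
        apply(stock, changes)
    except ValueError:
        pass  # Both versions reject the batch; what differs is the state left behind.

print(loop_stock)    # {'widget': 3, 'gadget': 1}: a partial update leaked through
print(atomic_stock)  # {'widget': 5, 'gadget': 1}: unchanged, as the spec requires
```

Both functions raise the same error on the same input, so a test that only checks for the exception cannot tell them apart. The difference shows up only in the state left behind.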

Measuring the Right Thing

The problem with measuring output is that output can be right for the wrong reasons. A model might produce correct code because the pattern appeared in its training data, not because it reasoned through the constraints. Change the pattern slightly and the output breaks — revealing that the model was matching, not understanding.

A genuine understanding test would need to:

- Isolate the agent variable — no follow-up questions, no hints, no iterative refinement. One specification, one attempt, one output.

- Score on traps, not on output — the overall output might look fine. The question is whether the subtle constraints were honored.

- Use deterministic verification — automated test suites that check specific behaviors. Either the atomicity constraint was implemented or it wasn’t. Understanding is binary in execution, even when it’s fuzzy in conversation.
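
A harness along these lines might look like the sketch below. Everything in it is an assumption made for illustration: a submission is modeled as a dictionary of callables, the two trap checks stand in for a much larger suite, and the inclusive reading of "between 10 and 20" is taken as what the hypothetical spec says elsewhere. The point is only that each trap gets its own deterministic pass or fail result.

```python
from typing import Callable, Dict

Submission = Dict[str, Callable]  # hypothetical: function name -> implementation

def trap_inclusive_bounds(sub: Submission) -> bool:
    """Assumed spec: 'between 10 and 20' is inclusive at both ends."""
    in_range = sub["in_range"]
    return in_range(10) and in_range(20) and not in_range(9) and not in_range(21)

def trap_atomic_batch(sub: Submission) -> bool:
    """A failing batch must leave stock untouched; a valid batch must apply."""
    stock = {"widget": 5, "gadget": 1}
    try:
        sub["apply_batch"](stock, [("widget", -2), ("gadget", -5)])
    except Exception:
        pass
    ok = {"widget": 5, "gadget": 1}
    sub["apply_batch"](ok, [("widget", -2)])
    return stock == {"widget": 5, "gadget": 1} and ok == {"widget": 3, "gadget": 1}

TRAPS = {"inclusive_bounds": trap_inclusive_bounds, "atomic_batch": trap_atomic_batch}

def score_traps(sub: Submission, traps=TRAPS) -> Dict[str, bool]:
    """Run every trap check; a crash inside a check counts as a failure."""
    results = {}
    for name, check in traps.items():
        try:
            results[name] = bool(check(sub))
        except Exception:
            results[name] = False
    return results

def loop_batch(stock, changes):
    for sku, delta in changes:
        if stock.get(sku, 0) + delta < 0:
            raise ValueError(sku)
        stock[sku] = stock.get(sku, 0) + delta

def atomic_batch(stock, changes):
    staged = dict(stock)
    loop_batch(staged, changes)  # raises before anything is committed
    stock.clear()
    stock.update(staged)

# Two hypothetical submissions: one that looks fine, one that honors the traps.
plausible = {"in_range": lambda x: 10 < x < 20, "apply_batch": loop_batch}
careful = {"in_range": lambda x: 10 <= x <= 20, "apply_batch": atomic_batch}

print(score_traps(plausible))  # {'inclusive_bounds': False, 'atomic_batch': False}
print(score_traps(careful))    # {'inclusive_bounds': True, 'atomic_batch': True}
```

The per-trap dictionary is a deliberate choice over a single aggregate score: it records which constraints were honored, so a submission that passes nine traps and silently violates atomicity is not averaged into a reassuring number.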

What This Changes

If we measured AI by comprehension rather than output, we’d start making different decisions about which tools to use and how to use them. We’d stop choosing models based on benchmark leaderboards and start choosing them based on how they handle ambiguity.

More importantly, we’d recognize something that education reformers have been saying for years: the test shapes the behavior. When you test memorization, you get memorizers. When you test understanding, you get thinkers. The same applies to AI. When we benchmark pattern matching, we get pattern matchers. When we benchmark comprehension, we’ll get something closer to genuine capability.

The tools to do this aren’t hypothetical. Designing specifications with cognitive traps, building deterministic test harnesses, scoring on constraint adherence rather than output appearance — this is engineering, not research. It’s work that can be done today, with current models, using current tools.

We just have to decide to measure the right thing.

Third in a series examining the real problems people face with AI — and why the answer starts with asking better questions.

You’re reading 3 of 8.
